Now that the release of Drupal 8 is finally here, it is time to adapt our Drupal 7 build process to Drupal 8, while utilizing Docker. This post will take you through how we construct sites on Drupal 8 using dependency managers on top of Docker with Vagrant.

Keep a clean upstream repo

Over the past 3 or 4 years, developing websites has changed dramatically with the increasing popularity of dependency managers such as Composer, Bundler, npm, and Bower, among other tools. Drupal even has its own system that can handle dependencies, called Drush, albeit it is more than just a dependency manager for Drupal.

With all of these tools at our disposal, it is very easy to include code from other projects in our application without storing any of that code in the application code repository. This concept dramatically changes how you would typically maintain a Drupal site, since the typical way to manage a Drupal codebase is to have the entire Drupal docroot, including all dependencies, in the application code repository. Having everything in the docroot is fine, but you gain so much more power using dependency managers. You also lighten up the actual application codebase, because your repo only contains code that you wrote. There are tons of advantages to building applications this way, but I digress. This post is about how we utilize these tools to build Drupal sites, not an exhaustive list of why this is a good idea. Leave a comment if you want to discuss the advantages and disadvantages of this approach.

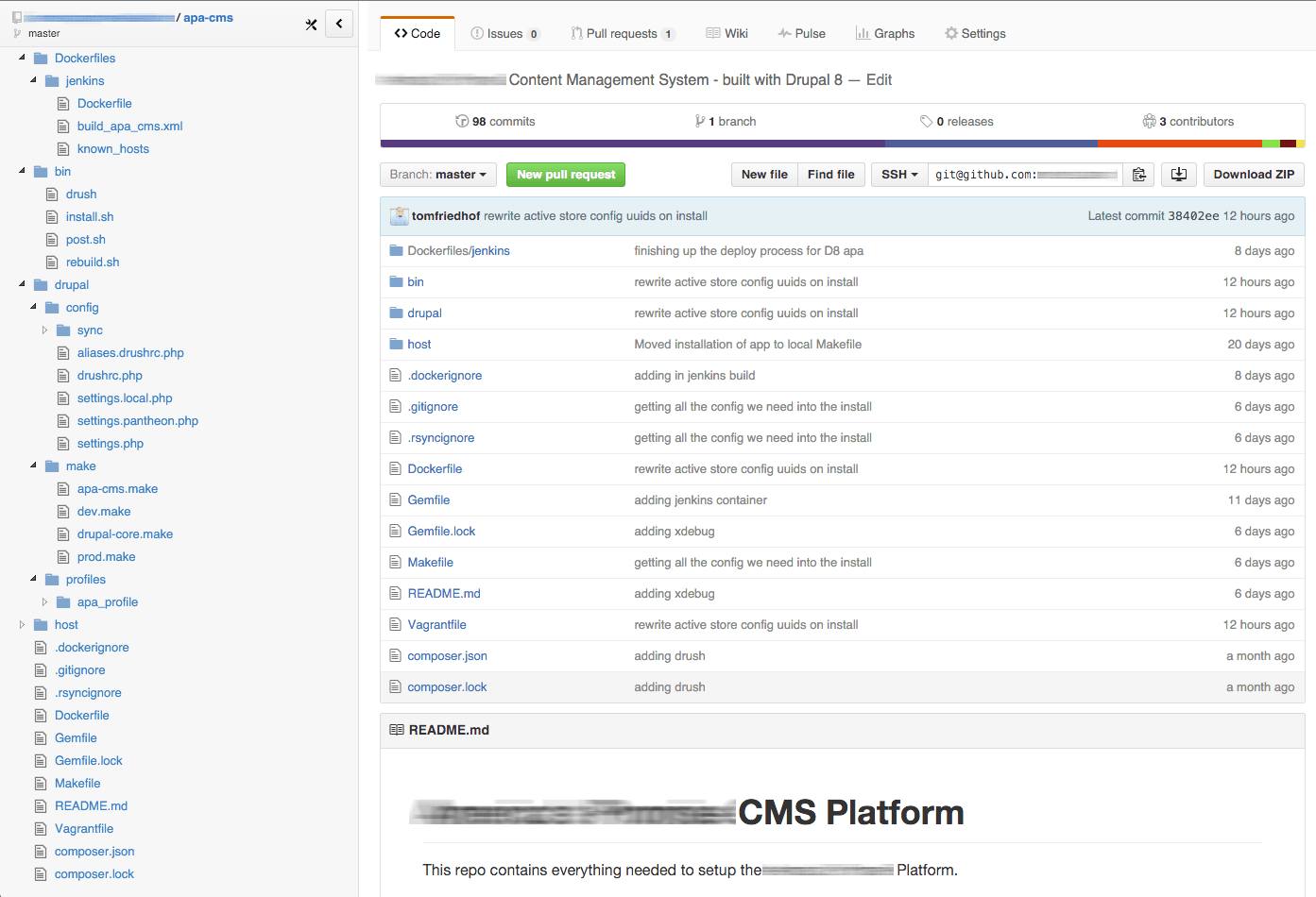

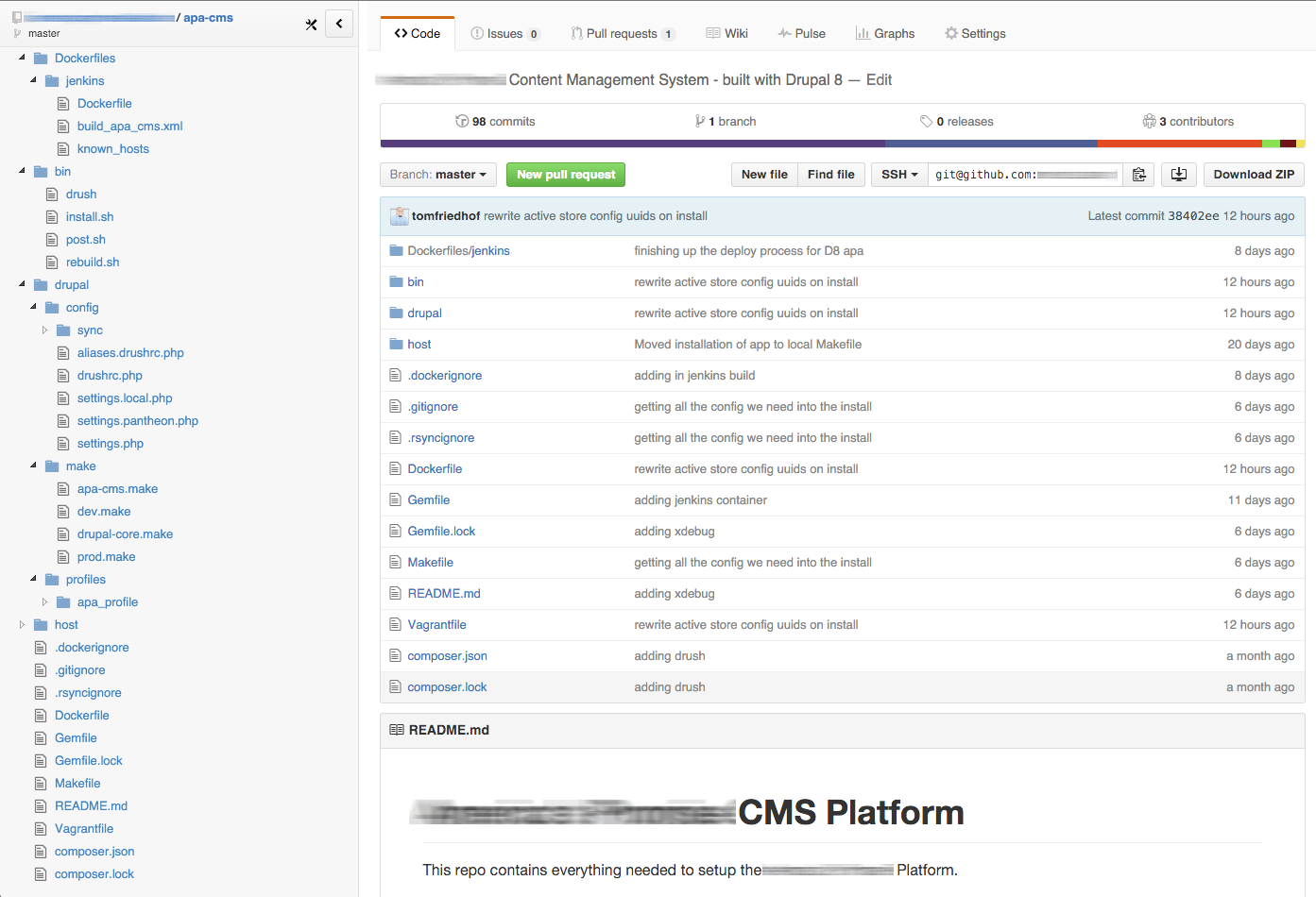

10,000 foot view of our repo

We've got a lot going on in this repository. We won't dive too deep into the weeds looking at every single file, but I will give a high-level overview of how things are put together.

Installation Automation (begin with the end in mind)

The simplicity in this process is that when a new developer needs to get a local development environment for this project, they only have to execute two commands:

$ vagrant up --no-parallel

$ make install

Within minutes a new development environment is constructed with VirtualBox and Docker on the developer's machine, so that they can immediately start contributing to the project. The first command boots up 3 Docker containers: a web server, a MySQL server, and a Jenkins server. The second command invokes Drush to build the document root within the web server container and then installs Drupal.

We also keep one more command running in a separate terminal window to keep files synced from our host machine to the Drupal 8 container.

$ vagrant rsync-auto drupal8

Breaking down the two installation commands

vagrant up --no-parallel

If you've read any of [my]({% post_url 2015-06-04-hashing-out-docker-workflow %}) [previous]({% post_url 2015-07-19-docker-with-vagrant %}) [posts]({% post_url 2015-09-22-local-docker-development-with-vagrant %}), you know I'm a fan of using Vagrant with Docker. I won't go into detail here about how the environment is set up; my previous posts cover how we use Docker with Vagrant. For completeness, here are the Vagrantfile and Dockerfile that vagrant up reads to set up the environment.

Vagrantfile

# BUILD ALL WITH:

# vagrant up --no-parallel

require 'fileutils'

MYSQL_ROOT_PASSWORD="root"

unless File.exist?("keys")

Dir.mkdir("keys")

ssh_pub_key = File.readlines("#{Dir.home}/.ssh/id_rsa.pub").first.strip

File.open("keys/id_rsa.pub", 'w') { |file| file.write(ssh_pub_key) }

end

unless File.exist?("Dockerfiles/jenkins/keys")

Dir.mkdir("Dockerfiles/jenkins/keys")

FileUtils.copy("#{Dir.home}/.ssh/id_rsa", "Dockerfiles/jenkins/keys/id_rsa")

end

Vagrant.configure("2") do |config|

config.vm.define "mysql" do |v|

v.vm.provider "docker" do |d|

d.vagrant_machine = "apa-dockerhost"

d.vagrant_vagrantfile = "./host/Vagrantfile"

d.image = "mysql:5.7.9"

d.env = { :MYSQL_ROOT_PASSWORD => MYSQL_ROOT_PASSWORD }

d.name = "mysql-container"

d.remains_running = true

d.ports = [

"3306:3306"

]

end

end

config.vm.define "jenkins" do |v|

v.vm.synced_folder ".", "/srv", type: "rsync",

rsync__exclude: get_ignored_files(),

rsync__args: ["--verbose", "--archive", "--delete", "--copy-links"]

v.vm.provider "docker" do |d|

d.vagrant_machine = "apa-dockerhost"

d.vagrant_vagrantfile = "./host/Vagrantfile"

d.build_dir = "./Dockerfiles/jenkins"

d.name = "jenkins-container"

# Save the Composer cache for all containers.

d.volumes = [

"/home/rancher/.composer:/root/.composer",

"/home/rancher/.drush:/root/.drush"

]

d.remains_running = true

d.ports = [

"8080:8080"

]

end

end

config.vm.define "drupal8" do |v|

v.vm.synced_folder ".", "/srv/app", type: "rsync",

rsync__exclude: get_ignored_files(),

rsync__args: ["--verbose", "--archive", "--delete", "--copy-links"],

rsync__chown: false

v.vm.provider "docker" do |d|

d.vagrant_machine = "apa-dockerhost"

d.vagrant_vagrantfile = "./host/Vagrantfile"

d.build_dir = "."

d.name = "drupal8-container"

d.remains_running = true

# Save the Composer cache for all containers.

d.volumes = [

"/home/rancher/.composer:/root/.composer",

"/home/rancher/.drush:/root/.drush"

]

d.ports = [

"80:80",

"2222:22"

]

d.link("mysql-container:mysql")

end

end

end

def get_ignored_files()

ignore_file = ".rsyncignore"

ignore_array = []

if File.exist?(ignore_file) && File.readable?(ignore_file)

File.read(ignore_file).each_line do |line|

ignore_array << line.chomp

end

end

ignore_array

end

One of the cool things we are doing in this Vagrantfile is setting up volumes for the Composer and Drush caches so that they persist beyond the life of the container. When our application container is rebuilt, we don't want to download 100MB of Composer dependencies every time. By utilizing a Docker volume, those folders live on the actual Docker host and are mounted into the container.
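Another detail in the Vagrantfile worth a closer look is the `get_ignored_files` helper, which turns each line of `.rsyncignore` into an rsync exclude pattern. As a minimal sketch, and not what this project actually uses, the variant below also skips blank lines and `#` comments so the ignore file can be annotated. The name `read_ignore_file` and the comment convention are assumptions made for illustration.

```ruby
require 'tmpdir'

# Hypothetical variant of get_ignored_files: returns the patterns listed in
# an ignore file, skipping blank lines and lines that start with "#".
def read_ignore_file(path = ".rsyncignore")
  return [] unless File.file?(path) && File.readable?(path)

  File.readlines(path, chomp: true)
      .map(&:strip)
      .reject { |line| line.empty? || line.start_with?("#") }
end

# Demo against a throwaway file rather than a real project.
Dir.mktmpdir do |dir|
  ignore_file = File.join(dir, ".rsyncignore")
  File.write(ignore_file, "# build artifacts\n.git/\n\nnode_modules/\n")
  p read_ignore_file(ignore_file)
end
```

In a Vagrantfile, the result could be passed to `rsync__exclude:` the same way `get_ignored_files()` is passed above.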

Dockerfile (drupal8-container)

FROM ubuntu:trusty

# set environment variables

ENV PROJECT_ROOT /srv/app

ENV DOCUMENT_ROOT /var/www/html

ENV DRUPAL_PROFILE=apa_profile

# Install packages.

RUN apt-get update

RUN apt-get install -y \

vim \

git \

apache2 \

php-apc \

php5-fpm \

php5-cli \

php5-mysql \

php5-gd \

php5-curl \

libapache2-mod-php5 \

curl \

mysql-client \

openssh-server \

phpmyadmin \

wget \

unzip \

supervisor

RUN apt-get clean

# Install Composer.

RUN curl -sS https://getcomposer.org/installer | php

RUN mv composer.phar /usr/local/bin/composer

# Setup SSH.

RUN mkdir /root/.ssh && chmod 700 /root/.ssh && touch /root/.ssh/authorized_keys && chmod 600 /root/.ssh/authorized_keys

RUN echo 'root:root' | chpasswd

RUN sed -i 's/PermitRootLogin without-password/PermitRootLogin yes/' /etc/ssh/sshd_config

RUN mkdir /var/run/sshd && chmod 0755 /var/run/sshd

RUN mkdir -p /root/.ssh

COPY keys/id_rsa.pub /root/.ssh/authorized_keys

RUN chmod 600 /root/.ssh/authorized_keys

RUN sed 's@session\s*required\s*pam_loginuid.so@session optional pam_loginuid.so@g' -i /etc/pam.d/sshd

# Install Drush

RUN composer global require drush/drush:8.0.0-rc3

RUN ln -nsf /root/.composer/vendor/bin/drush /usr/local/bin/drush

# Move Composer cache, is put back in install.sh

RUN mv /root/.composer /tmp/

# Setup PHP.

RUN sed -i 's/display_errors = Off/display_errors = On/' /etc/php5/apache2/php.ini

RUN sed -i 's/display_errors = Off/display_errors = On/' /etc/php5/cli/php.ini

# Setup Apache.

RUN sed -i 's/AllowOverride None/AllowOverride All/' /etc/apache2/apache2.conf

RUN a2enmod rewrite

# Setup Supervisor.

RUN echo '[program:apache2]\ncommand=/bin/bash -c "source /etc/apache2/envvars && exec /usr/sbin/apache2 -DFOREGROUND"\nautorestart=true\n\n' >> /etc/supervisor/supervisord.conf

RUN echo '[program:sshd]\ncommand=/usr/sbin/sshd -D\n\n' >> /etc/supervisor/supervisord.conf

# Configure X Debug

# RUN apt-get install -y php5-xdebug

# RUN echo "\nxdebug.max_nesting_level=256\nxdebug.default_enable=1\nxdebug.remote_enable=1\nxdebug.remote_handler=dbgp\nxdebug.remote_host=192.168.100.1\nxdebug.remote_port=9000\nxdebug.remote_autostart=0" >> /etc/php5/apache2/conf.d/20-xdebug.ini

WORKDIR $PROJECT_ROOT

EXPOSE 80 22

CMD exec supervisord -n

We have Xdebug commented out in the Dockerfile, but it can easily be enabled if you need to step through code. Simply uncomment the two RUN commands and run vagrant reload drupal8.

make install

We utilize a Makefile in all of our projects, whether Drupal, Node.js, or Laravel, so that we have a similar way to install applications regardless of the underlying technology. In this case make install executes a Drush command. Below are the contents of our Makefile for this project:

all: init install

init:

vagrant up --no-parallel

install:

bin/drush @dev install.sh

rebuild:

bin/drush @dev rebuild.sh

clean:

vagrant destroy drupal8

vagrant destroy mysql

mnt:

sshfs -C -p 2222 [email protected]:/var/www/html docroot

What make install does is SSH into the drupal8-container, utilizing Drush aliases and Drush shell aliases, and run install.sh there.

install.sh

The make install command executes a file, within the drupal8-container, that looks like this:

#!/usr/bin/env bash

echo "Moving the contents of composer cache into place..."

mv /tmp/.composer/* /root/.composer/

PROJECT_ROOT=$PROJECT_ROOT DOCUMENT_ROOT=$DOCUMENT_ROOT $PROJECT_ROOT/bin/rebuild.sh

echo "Installing Drupal..."

cd $DOCUMENT_ROOT && drush si $DRUPAL_PROFILE --account-pass=admin -y

chgrp -R www-data sites/default/files

rm -rf ~/.drush/files && cp -R sites/default/files ~/.drush/

echo "Importing config from sync directory"

drush cim -y

You can see on line 6 of the install.sh file that it executes rebuild.sh to actually build the Drupal document root using Drush Make. The reason for separating the build from the install is so that you can run make rebuild without completely reinstalling the Drupal database. After the document root is built, the drush site-install apa_profile command is run to actually install the site. Notice that we are utilizing installation profiles for Drupal.

We utilize installation profiles so that we can define modules available for the site, as well as specify default configuration to be installed with the site.

We work hard to achieve the ability to have Drupal install with all the necessary configuration in place out of the gate. We don't want to be passing around a database to get up and running with a new site.

We utilize the Devel Generate module to create the initial content for sites while developing.

rebuild.sh

The rebuild.sh file is responsible for building the Drupal docroot:

#!/usr/bin/env bash

if [ -d "$DOCUMENT_ROOT/sites/default/files" ]

then

echo "Moving files to ~/.drush/..."

mv $DOCUMENT_ROOT/sites/default/files /root/.drush/

fi

echo "Deleting Drupal and rebuilding..."

rm -rf $DOCUMENT_ROOT

echo "Downloading contributed modules..."

drush make -y $PROJECT_ROOT/drupal/make/dev.make $DOCUMENT_ROOT

echo "Symlink profile..."

ln -nsf $PROJECT_ROOT/drupal/profiles/apa_profile $DOCUMENT_ROOT/profiles/apa_profile

echo "Downloading Composer Dependencies..."

cd $DOCUMENT_ROOT && php $DOCUMENT_ROOT/modules/contrib/composer_manager/scripts/init.php && composer drupal-update

echo "Moving settings.php file to $DOCUMENT_ROOT/sites/default/..."

rm -f $DOCUMENT_ROOT/sites/default/settings*

cp $PROJECT_ROOT/drupal/config/settings.php $DOCUMENT_ROOT/sites/default/

cp $PROJECT_ROOT/drupal/config/settings.local.php $DOCUMENT_ROOT/sites/default/

ln -nsf $PROJECT_ROOT/drupal/config/sync $DOCUMENT_ROOT/sites/default/config

chown -R www-data $PROJECT_ROOT/drupal/config/sync

if [ -d "/root/.drush/files" ]

then

cp -Rf /root/.drush/files $DOCUMENT_ROOT/sites/default/

chmod -R g+w $DOCUMENT_ROOT/sites/default/files

chgrp -R www-data sites/default/files

fi

This file essentially downloads Drupal using the dev.make Drush Make file. It then runs composer drupal-update to download any Composer dependencies required by the modules. We use the Composer Manager module to help with Composer dependencies within the Drupal application.
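One part of rebuild.sh that is easy to miss is how uploaded files survive the `rm -rf` of the document root: they are moved aside first and copied back after the build. The Ruby sketch below replays that pattern against throwaway directories so it can be run safely. The helper name `rebuild_preserving_files`, the stash location, and the fake build step are assumptions for illustration, not part of the project.

```ruby
require 'fileutils'
require 'tmpdir'

# Hypothetical helper mirroring rebuild.sh: stash sites/default/files,
# wipe the docroot, run the build step (the block), then restore the files.
def rebuild_preserving_files(docroot, stash_dir)
  files   = File.join(docroot, "sites", "default", "files")
  stashed = File.join(stash_dir, "files")

  if Dir.exist?(files)
    FileUtils.mkdir_p(stash_dir)
    FileUtils.rm_rf(stashed)   # drop any stale stash (a choice of this sketch)
    FileUtils.mv(files, stashed)
  end

  FileUtils.rm_rf(docroot)
  yield docroot                # stands in for the `drush make` step

  return unless Dir.exist?(stashed)
  FileUtils.mkdir_p(File.join(docroot, "sites", "default"))
  FileUtils.cp_r(stashed, File.join(docroot, "sites", "default"))
end

# Demo: a fake docroot with one uploaded file, rebuilt from scratch.
Dir.mktmpdir do |tmp|
  docroot = File.join(tmp, "html")
  stash   = File.join(tmp, "drush")
  FileUtils.mkdir_p(File.join(docroot, "sites", "default", "files"))
  File.write(File.join(docroot, "sites", "default", "files", "logo.png"), "fake upload")

  rebuild_preserving_files(docroot, stash) do |root|
    FileUtils.mkdir_p(File.join(root, "core")) # pretend build output
  end

  puts Dir.glob("**/*", base: docroot).sort
end
```

The stash is copied back rather than moved, as in rebuild.sh, so a copy of the files stays outside the docroot for the next rebuild.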

Running drush make against dev.make pulls in two other Drush Make files: apa-cms.make (the application make file), which dev.make includes, and drupal-core.make, which apa-cms.make includes. Only dev dependencies should go in dev.make. Application dependencies go into apa-cms.make. Any core patches that need to be applied go into drupal-core.make.
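To make the include chain concrete, here is a small Ruby sketch that follows the `includes` key from one make file to the next and lists the files in the order they are reached. It is not how Drush Make resolves includes internally. The helper name `include_chain`, the stripped-down file contents, and resolving include paths relative to the including file are all assumptions of the sketch.

```ruby
require 'yaml'
require 'tmpdir'

# Hypothetical helper: list a make file followed by everything it includes,
# depth first. Each file is visited once, so a cycle cannot loop forever.
def include_chain(path, seen = [])
  path = File.expand_path(path)
  return seen if seen.include?(path)
  seen << path

  data = YAML.safe_load(File.read(path)) || {}
  Array(data["includes"]).each do |inc|
    include_chain(File.expand_path(inc, File.dirname(path)), seen)
  end
  seen
end

# Demo with minimal stand-ins for the three make files in this post.
Dir.mktmpdir do |dir|
  {
    "dev.make"         => { "core" => "8.x", "api" => 2, "includes" => ["apa-cms.make"] },
    "apa-cms.make"     => { "core" => "8.x", "api" => 2, "includes" => ["drupal-core.make"] },
    "drupal-core.make" => { "core" => "8.x", "api" => 2 }
  }.each { |name, body| File.write(File.join(dir, name), body.to_yaml) }

  chain = include_chain(File.join(dir, "dev.make"))
  puts chain.map { |f| File.basename(f) }
end
```

Starting from dev.make, the demo lists dev.make, then apa-cms.make, then drupal-core.make. Pointing it at prod.make would skip the dev-only layer while sharing the rest of the chain.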

Our Jenkins server builds the prod.make file instead of dev.make. Any production-specific modules would go in the prod.make file.

Our make files for this project look like this so far:

dev.make

core: "8.x"

api: 2

defaults:

projects:

subdir: "contrib"

includes:

- "apa-cms.make"

projects:

devel:

version: "1.x-dev"

apa-cms.make

core: "8.x"

api: 2

defaults:

projects:

subdir: "contrib"

includes:

- drupal-core.make

projects:

address:

version: "1.0-beta2"

composer_manager:

version: "1.0-rc1"

config_update:

version: "1.x-dev"

ctools:

version: "3.0-alpha17"

draggableviews:

version: "1.x-dev"

ds:

version: "2.0"

features:

version: "3.0-alpha4"

field_collection:

version: "1.x-dev"

field_group:

version: "1.0-rc3"

juicebox:

version: "2.0-beta1"

layout_plugin:

version: "1.0-alpha19"

libraries:

version: "3.x-dev"

menu_link_attributes:

version: "1.0-beta1"

page_manager:

version: "1.0-alpha19"

pathauto:

type: "module"

download:

branch: "8.x-1.x"

type: "git"

url: "http://github.com/md-systems/pathauto.git"

panels:

version: "3.0-alpha19"

token:

version: "1.x-dev"

zurb_foundation:

version: "5.0-beta1"

type: "theme"

libraries:

juicebox:

download:

type: "file"

url: "https://www.dropbox.com/s/hrthl8t1r9cei5k/juicebox.zip?dl=1"

(once this project goes live, we will pin the version numbers)

drupal-core.make

core: "8.x"

api: 2

projects:

drupal:

version: 8.0.0

patch:

- https://www.drupal.org/files/issues/2611758-2.patch

prod.make

core: "8.x"

api: 2

includes:

- "apa-cms.make"

projects:

apa_profile:

type: "profile"

subdir: "."

download:

type: "copy"

url: "file://./drupal/profiles/apa_profile"

Front-end Tools

At the root of our project we also have a Gemfile, specifically to install the Compass compiler along with various Sass libraries. We install these tools on the host machine and "watch" those directories from the host. vagrant rsync-auto watches for changed files and rsyncs them to the drupal8-container.

bundler

From the project root, installing these dependencies and running a compass watch is simple:

$ bundle

$ bundle exec compass watch path/to/theme

bower

We pull in any 3rd party front-end libraries, such as Foundation and Font Awesome, using Bower. From within the theme directory:

$ bower install

There are a few things we do not commit to the application repo, as a result of the above commands.

- The CSS directory

- Bower Components directory

Deploy process

As I stated earlier, we utilize Jenkins CI to build an artifact that we can deploy. Within the Jenkins job that handles deploys, each of the above steps is executed to create a document root that can be deployed. Projects that we build to run on Acquia or Pantheon have an additional build step that pushes the updated artifact to their respective repositories at the host, to take advantage of the automation that Pantheon and Acquia provide.

Conclusion

Although this wasn't an exhaustive walkthrough of how we structure and build sites using Drupal, it should give you a general idea of how we do it. If you have specific questions as to why we go through this entire build process just to set up Drupal, please leave a comment. I would love to continue the conversation.

Look out for a video on this topic in the coming weeks. I covered a lot in this post without going into much detail. The intent of this post was to give a 10,000 foot view of the process. The upcoming video will get much closer to the tarmac!

As an aside, one caveat that we did run into with setting up default configuration in our installation profile was with Configuration Management UUIDs. You can only sync configuration between sites that are clones. We have overcome this limitation with a workaround in our installation profile. I'll leave that topic for my next blog post in a few weeks.